From Meteoric Iron to Early Smelting: The Metallurgical Origins of Steel

Long before humans understood the chemistry of carbon and iron, they were already manipulating these elements by instinct and observation. The earliest iron objects we've recovered, dating back to roughly 3200 BCE in ancient Egypt, weren't smelted at all. They were shaped from meteoritic iron, a naturally occurring nickel-iron alloy that fell from the sky and required no extraction whatsoever. The famous iron beads of Gerzeh, analyzed in 2013 via electron microscopy, confirmed this extraterrestrial origin and revealed surprisingly sophisticated cold-hammering techniques for their era. These earliest transformations of iron set the stage for everything that followed, so they are worth understanding before turning to the smelting revolution proper.

The leap from working with ready-made meteoritic iron to actually extracting iron from terrestrial ores was neither quick nor linear. It took millennia of accumulated pyrometallurgical knowledge, most of it developed through copper and bronze working, before smiths in Anatolia and the Near East began reducing iron oxides with charcoal at temperatures approaching 1200°C. This transition — commonly dated to around 1200–1000 BCE and often called the beginning of the Iron Age — didn't produce steel in any modern sense. The bloom iron that emerged from these early shaft furnaces was a spongy, heterogeneous mass of iron mixed with slag, requiring extensive mechanical working to achieve any structural integrity.

The Carburization Breakthrough: Accidental Steel

The critical insight that transforms iron into steel is carbon content: steel is iron containing up to about 2.1% carbon by weight, beyond which the alloy behaves as cast iron. Early smiths discovered, empirically rather than theoretically, that iron worked repeatedly in a charcoal fire absorbed carbon and became dramatically harder. This process, now called cementation or carburization, was almost certainly discovered by accident: a blade left too long in a hot forge, then quenched in water, emerging with properties no ordinary iron possessed. The Hittites are often credited with controlling this process deliberately as early as 1400 BCE, and older scholarship treated their ironworking as a strategic military advantage behind their regional dominance, although many historians now regard that link as overstated.
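The carbon boundaries used throughout this article come from the equilibrium iron-carbon phase diagram. As a rough illustration, the sketch below classifies a plain iron-carbon alloy by carbon content alone, assuming the common textbook thresholds of 0.022% (carbon solubility limit in ferrite), 0.76% (the eutectoid composition), and 2.14% (carbon solubility limit in austenite). The function name, the tolerance used for "eutectoid", and the category labels are illustrative choices, and real classification also depends on slag, phosphorus, and alloying elements.

```python
import math

# Approximate Fe-C phase-diagram thresholds in weight percent carbon.
# Common textbook values; sources differ slightly (e.g. 0.76 vs 0.77).
FERRITE_MAX_C = 0.022    # max carbon solubility in ferrite (alpha iron)
EUTECTOID_C = 0.76       # eutectoid composition (fully pearlitic when slowly cooled)
AUSTENITE_MAX_C = 2.14   # max carbon solubility in austenite; conventional upper limit for steel


def classify_fe_c(carbon_wt_pct: float) -> str:
    """Classify a plain iron-carbon alloy by carbon content alone.

    Ignores slag, phosphorus, and alloying elements, so it is only a rough guide.
    """
    if carbon_wt_pct < 0:
        raise ValueError("carbon content cannot be negative")
    if carbon_wt_pct <= FERRITE_MAX_C:
        return "commercially pure iron"
    # Treat anything within 0.01 wt% of the eutectoid point as eutectoid.
    if math.isclose(carbon_wt_pct, EUTECTOID_C, abs_tol=0.01):
        return "eutectoid steel"
    if carbon_wt_pct < EUTECTOID_C:
        return "hypoeutectoid steel"
    if carbon_wt_pct <= AUSTENITE_MAX_C:
        return "hypereutectoid steel"
    return "cast iron"


if __name__ == "__main__":
    # Carbon levels of the kind mentioned in this article, chosen for illustration.
    for c in (0.1, 0.6, 1.0, 1.5, 3.0):
        print(f"{c:.1f}% C -> {classify_fe_c(c)}")
```

Under these thresholds, a low-carbon core steel such as shingane falls in the hypoeutectoid range, while wootz-type crucible steel lands in the hypereutectoid range.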

Archaeological sites like Tel Beth-Shemesh in Israel and Luristan in western Iran have yielded iron artifacts with measurable carbon gradients, higher at the surface and lower toward the core, precisely what you'd expect from surface carburization. This is direct physical evidence that ancient smiths were differentially hardening iron objects, even without conceptual frameworks like the iron-carbon phase diagram to guide them. Read as records of how they were made, these artifacts reveal a level of process control that contradicts the common assumption that ancient metallurgy was purely trial and error.

Wootz, Pattern Welding, and Regional Divergence

By approximately 300 BCE, Indian smiths in the Tamil Nadu region had developed wootz steel, produced by sealing iron with carbon-rich organic material in clay crucibles and heating it until fully molten. This produced a high-carbon steel (1.0–1.5% C) whose carbide banding appears as the legendary Damascus pattern. Crucible steel technology represented a fundamentally different metallurgical path from the carburized bloom iron of the Mediterranean world, and European metallurgists only began seriously studying it and attempting to reproduce it in the 19th century. Meanwhile, Germanic and Celtic smiths were developing pattern-welded composites: alternating layers of high- and low-carbon iron forge-welded together to combine toughness with edge retention.

These parallel developments across Eurasia underscore a fundamental truth about early steel: it was not a single invention but a convergent solution arrived at independently by cultures with access to iron ore, fuel, and enough accumulated craft knowledge to push the material toward its limits. Tracing this convergence across centuries and continents reveals that the basic metallurgical challenges — balancing hardness against brittleness, scaling production, controlling carbon uptake — have remained essentially constant from the first cemented bloom to the modern basic oxygen furnace.

Wootz, Damascus, and Tamahagane: Ancient High-Performance Steels and Their Craft Traditions

Long before industrial metallurgy existed, three distinct craft traditions independently solved the fundamental challenge of producing high-carbon steel with superior mechanical properties. Wootz from the Indian subcontinent, Damascus steel from the Near East, and Tamahagane from Japan each represent peak achievements of pre-industrial materials science — and each relied on tightly guarded process knowledge that, in some cases, was lost entirely for centuries. Understanding these traditions is not merely an exercise in history; it reveals engineering principles that modern metallurgists continue to study and, in certain applications, replicate.

Wootz and Damascus: The Crucible Steel Lineage

Wootz steel originated in southern India, with documented production dating back to at least 300 BCE in regions around present-day Tamil Nadu and Sri Lanka. The process involved melting iron together with carbonaceous organic materials, typically wood chips and plant matter, inside sealed clay crucibles at temperatures approaching 1,400–1,500°C. This produced a hypereutectoid steel containing between 1.0% and 2.1% carbon, with a characteristic microstructure of carbide banding that gave finished blades their distinctive surface patterns. The mechanical result was exceptional: hardness values regularly exceeding 60 HRC combined with an unusual toughness that most high-carbon steels of comparable hardness cannot achieve. The crucible technique alone does not explain these results; the interaction between iron, carbon, and trace vanadium and molybdenum impurities from particular ore sources turns out to be critical, because these elements help the carbides form their characteristic bands. The original smiths selected such ores empirically, without knowing the elements' metallurgical function.

The connection between wootz and what became known as Damascus steel is direct: wootz ingots, primarily exported through the trading city of Damascus, were worked by Levantine and Persian smiths into finished blades. The forging process, with its controlled heating cycles, specific deformation sequences, and acid-etching protocols, revealed the internal carbide network as the iconic watered-silk surface pattern. That dependence on precise forging and finishing explains why attempts to reproduce authentic Damascus steel failed repeatedly between roughly 1750 and the late 20th century: the properties depended on nanoscale carbide arrangements, not simply on the pattern-welding techniques that modern "Damascus" blades often employ instead.

Tamahagane: Japan's Differential Hardening Tradition

Tamahagane, meaning "jewel steel," emerged from the Japanese tatara smelting tradition around the 8th century CE. The process used a clay furnace packed with iron sand (satetsu) and charcoal, operated over 72-hour continuous burns consuming up to 13 tons of charcoal to produce approximately 2 tons of raw steel bloom. What makes tamahagane metallurgically distinctive is the intentional production of a heterogeneous bloom containing zones with carbon content ranging from 0.1% to over 1.5%. Skilled smiths then sorted and selectively combined these zones:

- Hagane (high-carbon, ~0.6–1.5% C) formed the hard cutting edge

- Shingane (low-carbon, ~0.1–0.3% C) formed the tough inner core

- Kawagane (medium-carbon) wrapped the exterior for surface quality

The subsequent folding and welding process, sometimes exceeding 15 folding cycles, refined grain structure and distributed the remaining slag inclusions more evenly. The full sequence of tamahagane production and blade construction shows that Japanese smiths effectively engineered a functionally graded material, achieving edge hardness of 60–65 HRC while keeping the spine below 40 HRC for toughness in the same blade, a combination modern composite manufacturing struggles to match economically at small scale.

All three traditions share a common insight that took Western metallurgy until the 19th century to formalize: carbon distribution and microstructural control, not raw material quality alone, determine steel performance. The craft knowledge embedded in these systems was empirical but precise, transmitted through apprenticeship over generations, and optimized for specific performance targets that remain relevant benchmarks today.

Medieval and Viking Steelmaking: Techniques, Traditions, and Metallurgical Knowledge

Between roughly 500 and 1500 CE, European and Scandinavian smiths developed steelmaking traditions that remain remarkable feats of empirical metallurgy. Without access to modern thermometers, spectrometers, or chemical analysis, these craftsmen learned to control carbon content, forge temperatures, and quench rates through sensory observation alone: reading flame color, listening to the ring of hammer on metal, and judging steel readiness by the precise shade of orange it emitted in a darkened smithy. The gap between their intuitive knowledge and what we now understand through materials science is far smaller than most people assume.

The Bloomery Process and Carbon Control

Medieval steelmaking centered on the bloomery furnace, a relatively simple shaft construction of clay and stone reaching internal temperatures between 1100°C and 1200°C, hot enough to reduce iron ore but below iron's melting point of 1538°C. The output, a spongy mass called the bloom, contained heterogeneous mixtures of iron and steel with carbon concentrations ranging from near zero to approximately 1.5%. The smith's real skill lay in selective working: identifying and isolating the higher-carbon zones through repeated folding and forging, effectively concentrating usable steel from raw bloom material. Surviving artifacts from sites like Haithabu in northern Germany show that medieval smiths achieved consistent carbon contents of 0.4–0.8% in cutting edges, well within the range we'd classify today as medium- to high-carbon steel.

Anyone who tries to reconstruct these methods hands-on quickly discovers how physically demanding and skill-intensive selective bloom working was. Modern experimental archaeology has found that producing a single usable sword blank from bloom iron required between 8 and 15 hours of continuous smithing.

Viking Pattern-Welding and Metallurgical Sophistication

Pattern-welding, often misnamed "Damascus steel" in popular culture, represented the Viking Age's most sophisticated steel technology. Smiths twisted and folded rods of high- and low-carbon iron together, forge-welding them at temperatures exceeding 1100°C to create layered composites with hundreds of individual laminations. The Ulfberht swords, produced between approximately 800 and 1000 CE, represent a different and particularly advanced case: metallurgical analysis of some examples has revealed crucible steel with a carbon content of around 1.0%, likely imported from Central Asia via Volga trade routes and then worked by European smiths who understood its superior properties.

The specific ore sources, fuel management, and forge construction techniques the Norse employed deserve careful study in their own right, particularly the relationship between bog iron quality and the techniques smiths developed to compensate for its high phosphorus content.

Key metallurgical practices shared across medieval European traditions included:

- Differential hardening: quenching only the cutting edge while leaving the spine soft, achieved by clay coating before quenching

- Carburization: packing wrought iron in charcoal within sealed clay vessels for 6–12 hours to absorb carbon at the surface

- Slag management: repeated folding to mechanically expel silicate inclusions that would otherwise create fracture points

- Tempering by color: drawing hardened steel back to a straw or blue oxide color (roughly 200–300°C) to reduce brittleness
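The carbon gradients found in carburized artifacts, and the 6–12 hour charcoal packing described in the list above, can be estimated with the standard solution of Fick's second law for a semi-infinite solid held at constant surface carbon. The sketch below is an illustration under stated assumptions, not a reconstruction of any historical process: it uses commonly tabulated textbook diffusion data for carbon in austenite (D0 = 2.3e-5 m²/s, Q = 148 kJ/mol), and the 925°C temperature, 1.0% surface carbon, and 0.05% core carbon are assumed values, since the actual conditions in a medieval forge are unknown. The function names are hypothetical.

```python
import math

GAS_CONSTANT = 8.314  # J/(mol*K)

# Assumed textbook diffusion data for carbon in austenite (gamma iron).
D0_CARBON_IN_AUSTENITE = 2.3e-5     # m^2/s, pre-exponential factor
Q_CARBON_IN_AUSTENITE = 148_000.0   # J/mol, activation energy


def carbon_diffusivity(temp_c: float) -> float:
    """Arrhenius diffusivity of carbon in austenite, in m^2/s."""
    temp_k = temp_c + 273.15
    return D0_CARBON_IN_AUSTENITE * math.exp(-Q_CARBON_IN_AUSTENITE / (GAS_CONSTANT * temp_k))


def carbon_at_depth(depth_mm: float, hours: float, temp_c: float = 925.0,
                    surface_c: float = 1.0, core_c: float = 0.05) -> float:
    """Carbon (wt%) at a given depth below the surface after carburizing.

    Constant-surface-concentration solution of Fick's second law:
        C(x, t) = Cs - (Cs - C0) * erf(x / (2 * sqrt(D * t)))
    """
    diffusivity = carbon_diffusivity(temp_c)
    seconds = hours * 3600.0
    depth_m = depth_mm / 1000.0
    return surface_c - (surface_c - core_c) * math.erf(
        depth_m / (2.0 * math.sqrt(diffusivity * seconds)))


if __name__ == "__main__":
    for hours in (6, 12):
        # Characteristic diffusion length sqrt(D*t), converted to millimetres.
        length_mm = math.sqrt(carbon_diffusivity(925.0) * hours * 3600.0) * 1000.0
        profile = ", ".join(f"{d} mm: {carbon_at_depth(d, hours):.2f}% C"
                            for d in (0.0, 0.5, 1.0, 2.0))
        print(f"{hours} h (sqrt(Dt) ~ {length_mm:.2f} mm): {profile}")
```

With these assumed values, the characteristic diffusion length sqrt(D·t) comes out at roughly 0.4 mm for 6 hours and 0.6 mm for 12 hours. Doubling the time deepens the carbon-rich layer only by a factor of about 1.4, which is consistent with carburized artifacts showing a hard skin over a softer core rather than uniform high-carbon steel.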

What makes this period genuinely instructive for modern metallurgists is not nostalgia but precision: medieval smiths solved real engineering problems, such as toughness-versus-hardness trade-offs and weld integrity under cyclic loading, using feedback loops refined over generations of craft apprenticeship. Their solutions were well optimized for the constraints they worked under, and several, including differential heat treatment, remain in use in high-performance blade manufacturing today.

The Bessemer Process: How One Invention Triggered Industrial-Scale Steel Production

Before 1856, producing a single ton of steel required days of labor-intensive work and substantial fuel costs. The Bessemer process collapsed that timeline to roughly 20 minutes per heat, fundamentally rewiring the economics of steel manufacturing worldwide. Few technological breakthroughs in industrial history carry the same density of consequences: falling prices, railway expansion, urban construction booms, and the emergence of the first vertically integrated steel corporations all trace a direct line back to a single converter vessel.

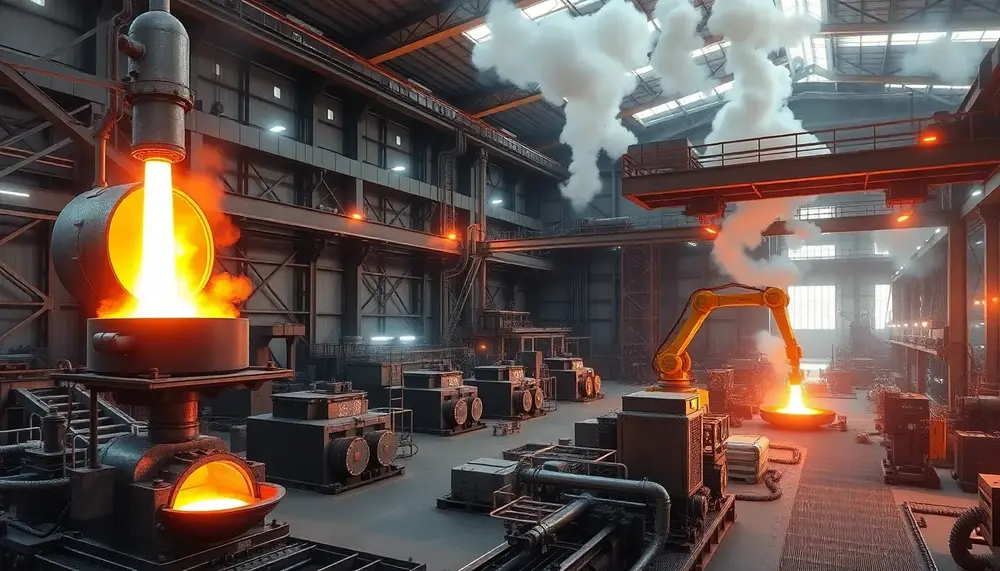

The Mechanics Behind the Revolution

Henry Bessemer, an independent inventor whose converter would reshape an entire manufacturing sector, filed his pivotal patent in 1856. The core insight was deceptively simple: instead of adding fuel to refine molten pig iron, force compressed air through it. The oxygen reacts exothermically with dissolved carbon, silicon, and manganese, generating enough internal heat to keep the charge molten while burning off impurities faster than any furnace operator could achieve manually.
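The oxidation chemistry in the paragraph above can be summarized with simplified overall reactions. This is a sketch: in the melt these elements are dissolved in iron and largely react through iron oxide and slag rather than directly with gaseous oxygen, and some iron is inevitably oxidized as well.

```latex
\begin{aligned}
\mathrm{Si} + \mathrm{O_2} &\longrightarrow \mathrm{SiO_2} \\
2\,\mathrm{Mn} + \mathrm{O_2} &\longrightarrow 2\,\mathrm{MnO} \\
2\,\mathrm{C} + \mathrm{O_2} &\longrightarrow 2\,\mathrm{CO}
\end{aligned}
```

Silicon and manganese pass into the slag as oxides, while carbon leaves as carbon monoxide gas, which burns at the mouth of the converter and produces its characteristic flame. All three reactions release heat, which is why the blow needed no external fuel.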

The converter itself was a pear-shaped vessel lined with refractory material, capable of processing 5 to 30 tons of molten iron per batch. Operators tilted it horizontally to charge the liquid iron, rotated it upright to initiate the air blast, then tilted it again to pour finished steel — a choreography that skilled crews could execute repeatedly across a single shift. By the early 1870s, British and American plants were running multiple converters in parallel, producing annual outputs that would have taken traditional cementation furnaces decades to match.

Scale Effects and Economic Impact

The numbers tell the story with unusual clarity. In Britain, steel output rose from approximately 50,000 tons in 1860 to over 3 million tons by 1890. In the United States, Andrew Carnegie's Edgar Thomson Steel Works — specifically engineered around Bessemer converters — drove the price of steel rails from $100 per ton in 1875 to under $12 by 1898. That price collapse made transcontinental rail networks financially viable and simultaneously priced wrought iron permanently out of structural applications.
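As a quick arithmetic check on the figures quoted above, the sketch below converts them into growth factors and compound annual rates. It only restates the article's own numbers and does not verify them; the rail price is treated as exactly $12, so the computed decline is a lower bound for a price that fell "under $12". The helper names are illustrative.

```python
def growth_factor(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def annual_rate(start: float, end: float, years: float) -> float:
    """Compound annual rate of change (negative for a decline)."""
    return (end / start) ** (1.0 / years) - 1.0


def percent_change(start: float, end: float) -> float:
    """Total percentage change from start to end."""
    return (end - start) / start * 100.0


if __name__ == "__main__":
    # British steel output, 1860 -> 1890, in tons (figures as quoted in the text).
    print(f"Output grew {growth_factor(50_000, 3_000_000):.0f}x, "
          f"about {annual_rate(50_000, 3_000_000, 30):.1%} per year")
    # Steel rail price, 1875 -> 1898, in dollars per ton; $12 treated as an upper bound.
    print(f"Rail price changed {percent_change(100, 12):.0f}%, "
          f"about {annual_rate(100, 12, 23):.1%} per year")
```

On these figures, British output grew about 60-fold, roughly 14.6% compounded per year for three decades, while the rail price fell by at least 88%, or close to 9% per year.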

Understanding how the converter evolved into a full mass production system requires looking beyond the hardware. The real innovation cascade included:

- Standardized rail profiles that allowed interchangeable components across national rail networks

- Integrated plant layouts that kept molten iron hot from blast furnace to converter, eliminating costly reheating cycles

- Shift-based labor organization replacing craft-guild structures with continuous, timed production runs

- Chemical quality control — rudimentary by modern standards but revolutionary at the time — to manage carbon content within commercial tolerances

The process did carry inherent limitations. It could not remove phosphorus from iron made with high-phosphorus European ores, a constraint that gave the later open-hearth furnace its commercial opening on the continent. The 1878 Thomas-Gilchrist modification addressed this with basic dolomite linings, but the fundamental Bessemer approach remained dominant in low-phosphorus ore regions through the early twentieth century. Nearly every later advance in steelmaking throughput built on Bessemer's efficiency gains and can be measured against this 1856 baseline.

Open Hearth Furnace vs. Bessemer Converter: Competing Technologies of the Industrial Era

By the 1870s, steelmakers faced a genuine strategic decision: commit to the explosive speed of the Bessemer converter or bet on the slower, more controllable open hearth furnace. Both technologies aimed to solve the same problem — converting pig iron into workable steel at industrial scale — but their operating principles, economics, and metallurgical outcomes diverged sharply. Understanding this rivalry means understanding why steel quality and production volume pulled in opposite directions for nearly half a century.

The Bessemer Advantage — and Its Fatal Flaw

Henry Bessemer's converter, patented in 1856, was a revolution in throughput. A single heat took as little as 20 minutes, and a well-run plant could process 150 to 200 tons per day per converter. Carnegie's Edgar Thomson Works in Pennsylvania demonstrated what that meant commercially: by 1890, the facility was producing steel rails at costs below $12 per ton, undercutting British competitors by a significant margin. The speed was intoxicating. However, the Bessemer process had a critical metallurgical constraint — it could not remove phosphorus from the charge. Ores with phosphorus content above roughly 0.1% produced brittle, unreliable steel. This locked American and British producers into expensive low-phosphorus ore sources and made vast European deposits, particularly in Lorraine and Luxembourg, effectively unusable until Sidney Gilchrist Thomas developed the basic Bessemer variant in 1878.

The converter also offered virtually no opportunity to adjust chemistry mid-process. Once the blow started, the metallurgist was largely a spectator. Carbon content control was notoriously imprecise, which led to variable mechanical properties across batches — a serious problem when railway companies began specifying minimum tensile strength requirements for structural steel in bridge construction contracts.

Why the Open Hearth Furnace Eventually Won

The regenerative heating principle behind the open hearth method, developed by Carl Wilhelm Siemens in the 1860s and applied to steelmaking by Pierre-Émile Martin in 1865, fundamentally changed what a steelmaker could control. A heat lasting 6 to 10 hours sounds like a liability, but that extended processing window allowed operators to take multiple samples, adjust additions, and hit specific compositional targets. This mattered enormously as steel specifications became more demanding across the 1880s and 1890s, and the gradual, sampled removal of carbon and impurities in the open hearth explains why structural engineers began specifying it explicitly in procurement contracts by the early 1900s.

The open hearth furnace could also accept a much higher proportion of cold scrap, up to 50% of the charge, which gave operators significant raw material flexibility and cost advantages when scrap markets were favorable. Furnace capacities scaled dramatically, with units of 200 to 500 tons per heat dwarfing anything achievable with a Bessemer converter. By 1910, open hearth production in the United States had already surpassed Bessemer output, and by 1920 the converter's share had collapsed to below 25% of total domestic steel tonnage.

What this competition ultimately produced was a steelmaking paradigm that dominated global production for seven decades before being displaced by the basic oxygen furnace in the second half of the twentieth century. The key lesson embedded in this rivalry is that raw throughput speed alone did not determine long-term technological dominance; process controllability and raw material flexibility mattered at least as much. The same pattern recurs as each later steelmaking breakthrough displaced its predecessor.

- Bessemer converter heat time: 15–25 minutes; open hearth heat time: 6–10 hours

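- Phosphorus tolerance: acid Bessemer limited to low-phosphorus ores (roughly 0.1% phosphorus or less) until the basic-lined Thomas-Gilchrist variant of 1878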

FAQ on the Evolution of Steel Manufacturing

What is the Bessemer process and why is it significant?

The Bessemer process, patented in 1856 by Henry Bessemer, transformed steel production by making it possible to produce steel economically in large quantities. By blowing air through molten pig iron, it cut the time needed to refine a batch from days to roughly 20 minutes and laid the foundation for modern steel manufacturing.

What is wootz steel?

Wootz steel is a high-carbon crucible steel that originated in India by around 300 BCE. It was made by melting iron with carbon-rich materials in a sealed clay crucible and is known for its distinctive patterns and superior mechanical properties. Wootz ingots were the raw material for the legendary Damascus steel blades.

How did medieval smiths control carbon content?

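Medieval smiths controlled carbon content through empirical methods: selecting and concentrating the higher-carbon zones of the bloom by folding and forging, and carburizing wrought iron by packing it in charcoal. They lacked modern instruments and relied on sensory observation, such as flame color and the glow of the hot metal, to judge forge temperature and decide when the steel was ready for shaping and quenching.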

What are the differences between the Bessemer converter and the open hearth furnace?

The Bessemer converter is fast, producing steel in about 20 minutes, but in its original acid-lined form it cannot remove phosphorus. The open hearth furnace, by contrast, operates over 6 to 10 hours, which allows sampling and better control of impurities and chemical composition, and it is more adaptable to varying raw materials, including large proportions of scrap.

What role did steel play in the industrial revolution?

Steel was pivotal in the later phase of industrialization, often called the Second Industrial Revolution, because it enabled the construction of railways, skyscrapers, and machinery on an unprecedented scale. Its greater availability and lower cost, driven by advances in manufacturing processes, dramatically accelerated economic growth and urbanization during this period.